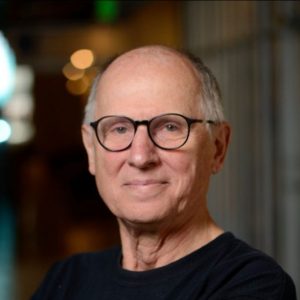

Ondřej Klejch is a senior researcher in the Centre for Speech Technology Research in the School of Informatics at the University of Edinburgh. He obtained his Ph.D. from the University of Edinburgh in 2020 and received his M.Sc. and B.Sc. from Charles University in Prague. He has worked on building automatic speech recognition systems with limited training data and supervision as part of several large projects funded by EPSRC, H2020, and IARPA. His recent work has investigated semi-supervised and unsupervised training methods for automatic speech recognition in low-resource languages.

Deciphering Speech: a Zero-Resource Approach to Cross-Lingual Transfer in ASR

Automatic speech recognition technology has achieved outstanding performance in recent years. This progress has been made possible by advances in deep learning and the availability of large training datasets. Production models are typically trained on thousands of hours of manually transcribed speech to achieve the best possible accuracy. Unfortunately, because manual annotation is expensive and time-consuming, automatic speech recognition is available for only a fraction of the world's languages and their speakers.

In this talk, I will describe methods we have successfully used to extend the language coverage of automatic speech recognition. First, I will describe semi-supervised training approaches for building systems from only a few hours of manually transcribed training data combined with large amounts of crawled audio and text. I will then discuss the training dynamics of these semi-supervised approaches and explain why a good language model is necessary for their success. Next, I will present a novel decipherment approach for training an automatic speech recognition system for a new language without any manually transcribed data. This method can "decipher" speech in a new language from as little as 20 minutes of audio, paving the way for automatic speech recognition in many more languages. Finally, I will talk about open challenges in training and evaluating automatic speech recognition models for low-resource languages.

His talk takes place on Thursday, December 14, 2023, at 14:00 in A113.